If common usage can change a comic strip into a hate symbol, then authentic engagement can similarly transform its meaning. If platforms want to protect their users from hateful content while ensuring that vital political expression isn’t affected, they should make hate speech policies that are informed by this reality.

Brittan Heller is counsel at Foley Hoag and a technology and human rights fellow at the Harvard Kennedy School. She was the founding director of Technology and Society for the Anti-Defamation League.


Tech company terms of service don’t explain how, in practice, they decide if nonhistorical content is hateful or no longer hateful. They do not state how context shifts affect their operations. The public does not know if companies revisit their enforcement calculus and, more important, the contours of how that might occur. Human rights impact assessments are common best practices in corporate social responsibility, but this is not yet a regular activity for most tech companies, especially in the context of hate speech and its impacts.

Third, companies should be responsive to shifting memes, especially in volatile contexts. Platforms need to evaluate when it is more likely that content on social media will result in offline consequences - especially in risky situations like political unrest and around elections - and then quickly reallocate more of their staff members, time and attention to monitor trends and challenges in related content and respond quickly.

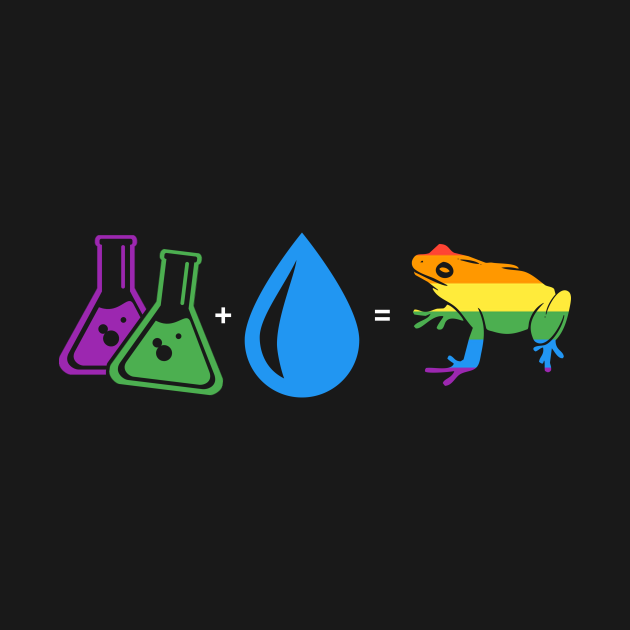

Cunning users termed the ghost Gaysper, and as the image went viral, he transformed from intended insult to a stance against hatred via mockery and humor. Pepe, Gaysper and other once-hateful symbols teach us that tech companies should institutionalize impermanence - they should build their policies to continually adapt to the changing world. Those who call on the companies to take steps to stem the tide of xenophobia, racism and the targeting of minorities that we’re seeing around the world should keep this in mind as well.

Local activists in Hong Kong transformed Pepe into an emoji on encrypted platforms, dressed as a protester or a journalist. “Symbols and colors that mean something in one culture can mean something completely different in another culture,” a protester told The Times, “so I think if Americans are really offended by this, we should explain to them what it means to us.” Most seem to understand the frog to be a symbol of youth and were unaware of his link to the alt-right. If Google, Twitter and Facebook had built in photo recognition to take down all images of Pepe the Frog, the movement might have been robbed of a critical rallying cry.

While platforms have been doing better at making broader commitments against hate, like Facebook’s recent announcement to take a wider view of hate speech and extremist-related content, what’s missing is the ground-floor view. So what can platforms do? First, they can fill the public information vacuum around hate speech policy.


To manage hate speech, platforms must constantly re-evaluate hateful symbols and communicate with users about their decision-making.

Few examples illustrate this need better than the long, strange journey of Pepe the Frog, the crudely drawn comic-book amphibian that originated as a mascot for slackers; was repeatedly altered by white supremacists for racist, homophobic and anti-Semitic memes; was classified by the Anti-Defamation League as a hate symbol in 2016; and was repurposed this summer and fall by protesters in Hong Kong to promote a pro-democracy message that had nothing to do with white supremacy or terrorism. Though exactly the same in appearance, the Hong Kong version of Pepe is a different frog. It provides a real-time demonstration of how hate speech can be defanged, based on shifting circumstances, expanded frames of reference and varied common usage.

But today, thanks to meme culture, online audiences can flip hate speech on its head faster than ever. In April, the Spanish political party Vox tweeted a meme referencing “The Lord of the Rings”: a picture of Aragorn, digitally manipulated to include the party logo and a Spanish flag. Aragorn was facing down orcs, who sported symbols for feminism, communism, media outlets - and oddly, an Android ghost emoji striped with rainbow colors.

The idea that platforms like Twitter, Facebook and Instagram should remove hate speech is relatively uncontroversial. Hate speech is fluid, dependent on cultural context and social meaning.